Filter out classes from JaCoCo report using annotations

Story

Engineering culture at scale is difficult to build and even harder to maintain. In our company, we made a deliberate decision to enforce code coverage as a merge requirement — a hard gate on the CI pipeline that prevented branches from being merged unless they met a minimum threshold. We chose 80%: every new or modified class had to be covered by unit tests to at least that level.

There are many debates about what the right coverage threshold is, whether coverage is a useful metric at all, and how to avoid gaming it. This article is not about that.

The policy had real effects. Teams began writing more testable code. Architecture improved — highly coupled, untestable code tends to resist coverage requirements in ways that become impossible to ignore. App stability measurably increased. The discipline around testing became part of how we worked, not an afterthought.

But we quickly discovered a class of code that was untestable not through neglect or poor design, but by its nature:

- Legacy code with deeply tangled dependencies, thousands of lines that would require months of refactoring before tests could be written — refactoring that the business would not prioritize because “it works”

- Auto-generated code — output from annotation processors, AIDL interface bindings, generated Room DAOs, Dagger components — code that is correct by construction and pointless to re-test

- Delegating code that exists only to forward calls and has no logic to verify

These classes needed to be excluded from coverage calculations so that teams could meet the threshold on the code they actually owned and controlled.

Then we migrated from Java to Kotlin, and the problem became significantly more complex.

The Kotlin Bytecode Problem

JaCoCo operates at the bytecode level. It instruments compiled .class files by injecting probes that track which lines and branches have been executed. This works reliably for Java code, where the relationship between source lines and bytecode is relatively straightforward.

Kotlin is different. The language is designed to eliminate boilerplate, and it achieves this by having the compiler generate significant amounts of bytecode from compact source constructs. A few examples:

- A simple data class with three fields generates equals, hashCode, toString, copy, and three componentN functions, all as fully realized bytecode methods that JaCoCo counts independently.

- A safe call s?.foo() compiles to a null check (an if/else branch in bytecode), even though there is no explicit conditional in the source.

- A lateinit var field access generates a null check that appears as an uncovered branch if the test path never triggers the uninitialized case.

- Companion objects, object declarations, and sealed class subclasses all generate additional bytecode scaffolding.

The result: coverage numbers for Kotlin projects are systematically lower than the source code suggests. A data class that any developer would consider fully tested — constructors called, methods invoked — shows gaps because JaCoCo is counting generated bytecode that has no corresponding source line for tests to exercise. For a large Kotlin project, this distortion can move the effective coverage percentage by 5–15 points.

When coverage is a KPI, or when it gates merges in an automated pipeline, that distortion has real consequences.
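The generated members described in this section can be observed directly. The following is a minimal sketch, assuming a hypothetical three-field data class named Point; it lists the methods present in the compiled class through plain Java reflection and shows that a safe call behaves like an explicit two-way branch. It illustrates what JaCoCo has to count, not how JaCoCo itself inspects classes.

```kotlin
// Hypothetical data class used only for illustration.
data class Point(val x: Int, val y: Int, val z: Int)

// Names of the methods that exist in the compiled class, read via Java reflection.
// None of equals/hashCode/toString/copy/componentN appear in the source above.
fun generatedMethodNames(): Set<String> =
    Point::class.java.declaredMethods.map { it.name }.toSet()

// A safe call: no visible conditional in the source...
fun lengthSafe(s: String?): Int? = s?.length

// ...but it behaves like this explicit branch, and both paths need a test.
fun lengthExplicit(s: String?): Int? = if (s != null) s.length else null

fun main() {
    println(generatedMethodNames().sorted())
    println(lengthSafe("abc") == lengthExplicit("abc"))
    println(lengthSafe(null) == lengthExplicit(null))
}
```

A test suite that only ever passes non-null strings to lengthSafe exercises one of the two branches, which is the kind of gap that shows up in the report without any matching source line.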

Approaches We Explored

1. Custom Gradle task with filename filters

The standard approach, and the first thing any team discovers when searching for this problem, is to define an exclusion list in the JacocoReport Gradle task:

    task jacocoTestReport(type: JacocoReport, dependsOn: [...]) {
        // filter rules
        def fileFilter = [
            '**/R.class',
            '**/R$*.class',
        ]
        def debugTree = fileTree(
            dir: "${buildDir}/intermediates/classes/debug",
            excludes: fileFilter // <-- exclude listed classes
        )
        classDirectories = files([debugTree])
        // some code...
    }

This works for known, predictable class names — R.class, generated Dagger classes, known suffixes. The exclusion list lives in one place and is easy to audit.

The problem is that glob patterns applied to class names are brittle. In one memorable incident, a rule intended to exclude R.class and its nested classes (**/R$*.class) was written as **/R*.class — a subtle difference that caused JaCoCo to silently exclude every class whose name began with “R”. This went unnoticed for weeks, producing a coverage report that was technically generated but factually wrong.
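The difference between the two patterns is easy to check in isolation. The sketch below uses the JDK's own glob matcher as a stand-in; Gradle applies Ant-style patterns, which I am assuming behave the same way for these simple cases. The class names (Repository, R$string) are hypothetical.

```kotlin
import java.nio.file.FileSystems
import java.nio.file.Paths

// Returns true if the relative class-file path matches the glob pattern.
// Uses java.nio's glob syntax as an approximation of Gradle's Ant-style patterns.
fun globMatches(pattern: String, path: String): Boolean =
    FileSystems.getDefault().getPathMatcher("glob:$pattern").matches(Paths.get(path))

fun main() {
    val repository = "com/example/Repository.class" // ordinary production class
    val nestedR = "com/example/R\$string.class"      // nested class of R

    // Intended rule: only nested classes of R are excluded.
    println(globMatches("**/R\$*.class", nestedR))
    println(globMatches("**/R\$*.class", repository))

    // Mistyped rule: any class whose name starts with "R" is excluded too.
    println(globMatches("**/R*.class", repository))
}
```

A handful of assertions like these, run against the real exclusion list, would be one cheap way to catch an overly broad pattern before it reaches the report.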

Glob-based exclusion also creates an organizational problem: the list of excluded classes is in the build configuration, disconnected from the source files. There is no mechanism to detect when an exclusion entry becomes stale because the class it targeted was renamed or deleted. The list tends to accumulate cruft over time.

2. Annotation processor

A colleague proposed a more elegant solution: create a @NoCoverage annotation and an annotation processor that reads it at compile time, collects all annotated class names, and writes them to a file. The Gradle task would then read that file as its exclusion list instead of a hardcoded array.

This was genuinely clever. The exclusion list becomes self-maintaining — developers annotate the classes they want excluded, and the configuration stays in sync with the source automatically.
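The consuming side of that idea could look roughly like the following. This is a sketch under assumptions, not the colleague's implementation: it assumes the processor writes one fully qualified top-level class name per line, and toExclusionPatterns is a hypothetical helper name.

```kotlin
// Turns fully qualified class names (one per line, as the hypothetical
// processor would write them) into class-file exclusion patterns.
fun toExclusionPatterns(lines: List<String>): List<String> =
    lines.map { it.trim() }
        .filter { it.isNotEmpty() }
        .flatMap { fqcn ->
            val path = fqcn.replace('.', '/')
            // The class itself, plus its nested and synthetic classes.
            listOf("**/$path.class", "**/$path\$*.class")
        }

fun main() {
    val fromProcessor = listOf("com.example.LegacyGateway", "")
    toExclusionPatterns(fromProcessor).forEach(::println)
}
```

Because the patterns are derived from exact class names, this avoids the hand-written wildcard mistakes of the first approach.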

The practical limitation is a phase mismatch. Annotation processors run during Kotlin/Java compilation. The JacocoReport Gradle task runs much later, after the tests have executed. Feeding the annotation processor's output into the Gradle task requires explicit task dependency wiring that is fragile across Gradle versions, build types, and module boundaries. In a multi-module project, the complexity becomes significant.

APT was designed to generate code, not to produce configuration consumed by external Gradle tasks. Using it for this purpose works, but it fights against the tool’s intended grain.

3. Forked Kotlin compiler

We learned that the JaCoCo team had added a special filter for Lombok — a Java code generation library — that suppressed coverage counting for methods annotated with @Generated. This made perfect sense: Lombok-generated code, like Kotlin-generated code, should not require test coverage.

A colleague proposed the obvious extension: if JaCoCo respects @Generated on methods, and if the Kotlin compiler generated that annotation on its auto-generated methods (data class helpers, etc.), the problem would be solved at the source.

The Kotlin compiler does not do this. So the colleague forked the Kotlin compiler and modified it to add @Generated annotations to every compiler-generated method.

This is remarkable. Forking a programming language’s compiler to solve a tooling problem is a drastic step, and it demonstrates both the severity of the issue and the depth of knowledge required to address it at that level. The forked compiler was never used in production — the maintenance burden would be untenable — but the experiment proved the concept worked.

Subsequently, JaCoCo added its own Kotlin filters, which rely on the @kotlin.Metadata annotation to recognize Kotlin classes and cover some Kotlin-generated constructs. But this did not fully solve the problem for all generated code in Kotlin projects.

Solution: Annotation + Kotlin typealias

While reading JaCoCo’s source code to understand what filters were available, I discovered something significant. The AnnotationGeneratedFilter — the filter originally added for Lombok support — had been changed from an exact name match to a substring match. Specifically, the annotation’s simple class name only needs to contain the word “Generated”.
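The matching rule described above can be approximated in a few lines. This is my own paraphrase of the behavior, not JaCoCo's source code; the function names are hypothetical, and the input is assumed to be a JVM type descriptor as it appears in a class file.

```kotlin
// Extracts the simple class name from a JVM type descriptor
// such as "Lcom/example/NoCoverageGenerated;".
fun simpleNameOf(descriptor: String): String =
    descriptor.removePrefix("L").removeSuffix(";")
        .substringAfterLast('/')  // drop the package
        .substringAfterLast('$')  // drop any enclosing class

// Approximates the substring rule: the simple name only has to contain "Generated".
fun wouldBeFiltered(descriptor: String): Boolean =
    simpleNameOf(descriptor).contains("Generated")

fun main() {
    println(wouldBeFiltered("Llombok/Generated;"))
    println(wouldBeFiltered("Lcom/example/NoCoverageGenerated;"))
    println(wouldBeFiltered("Lcom/example/NoCoverage;"))
}
```

Under an exact-match rule only the first descriptor would qualify; under the substring rule the second one does as well.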

This means that any annotation whose class name contains “Generated”, and which is retained in the compiled class file, will cause JaCoCo to exclude the annotated element from coverage analysis. We do not need javax.annotation.Generated, which in any case has source retention and never reaches the bytecode that JaCoCo reads. We can create our own annotation with an appropriate name:

    @Retention(AnnotationRetention.BINARY)
    annotation class NoCoverageGenerated

    typealias NoCoverage = NoCoverageGenerated

    // Note: aliasing javax.annotation.Generated instead would not work,
    // because its SOURCE retention keeps it out of the class file.

The typealias is a small but meaningful touch. At the bytecode level, NoCoverage is transparent — the JVM sees NoCoverageGenerated, which contains “Generated” and triggers the JaCoCo filter. At the source level, @NoCoverage is readable and clearly expresses the developer’s intent: this class is intentionally excluded from coverage.
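The transparency of the alias can be checked with reflection. The sketch below deliberately switches the retention to RUNTIME, which is an assumption made only so the annotation is observable at runtime; with BINARY retention the annotation is still written to the class file but reflection cannot see it. LegacyGateway is a hypothetical class.

```kotlin
// RUNTIME retention is used here only so the annotation can be observed via
// reflection; the article's version uses BINARY, which reflection cannot see.
@Retention(AnnotationRetention.RUNTIME)
annotation class NoCoverageGenerated

typealias NoCoverage = NoCoverageGenerated

// Hypothetical class standing in for code excluded from coverage.
@NoCoverage
class LegacyGateway

// Simple names of the annotations recorded on a compiled class.
fun annotationNames(type: Class<*>): List<String> =
    type.annotations.map { it.annotationClass.java.simpleName }

fun main() {
    // The alias name never appears; only the underlying annotation does.
    println(annotationNames(LegacyGateway::class.java))
}
```

The list contains NoCoverageGenerated rather than NoCoverage, which is why the filter still triggers even though the source only ever mentions the alias.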

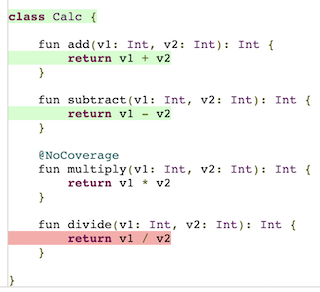

Applying it is simple:

    @NoCoverage
    data class GeneratedEntity(val id: Int, val name: String)

JaCoCo will skip this class entirely in its coverage calculations.

Pros:

- No build configuration changes

- No code generation

- Exclusion stays co-located with the source it applies to

- Works correctly across modules and build variants

Cons:

- This is a workaround. The JaCoCo wiki explicitly states:

Remark: A Generated annotation should only be used for code actually generated by compilers or tools, never for manual exclusion.

- The annotation is present in the compiled artifact. In Android projects using ProGuard, R8, or DexGuard for release builds, annotations with BINARY retention are stripped during optimization, so there is no production impact. For projects that do not use code shrinking, the annotation remains in the release artifact but has no runtime effect.

In practice, the constraint from the JaCoCo wiki reflects an ideal, not a reality. Large Kotlin projects contain genuinely untestable code — legacy code under business constraints, transitional code mid-refactor, structural code that is correct by construction. Teams need a practical mechanism to handle these cases. The annotation approach provides that mechanism with minimal overhead and maximal clarity at the point of use.

This article was originally posted on Medium.